Random Forest (Decision Trees Built with ID3)

Random Forest is a machine-learning algorithm that builds many decision trees, each trained on a different sample of the data, and then combines their results into a single final prediction.

Compared with a single tree, it usually reduces overfitting and improves accuracy, because it takes the “majority vote” (classification) or the “average” (regression) of all the trees' predictions.
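A minimal Python sketch of the combining step, assuming each tree has already produced its own prediction (the helper names `majority_vote` and `average` are illustrative, not from any library):

```python
from collections import Counter
from statistics import mean


def majority_vote(predictions):
    """Classification: return the label predicted by the most trees."""
    # most_common(1) returns [(label, count)]; on a tie, the label seen first wins
    return Counter(predictions).most_common(1)[0][0]


def average(predictions):
    """Regression: return the mean of the trees' numeric predictions."""
    return mean(predictions)


# Hypothetical outputs of three trees for the same input
print(majority_vote(["Yes", "No", "Yes"]))  # Yes
print(average([2.0, 3.0, 4.0]))             # 3.0
```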

Numerical Example: Constructing a Random Forest

| Day | Outlook | Temp | Humidity | Wind | Can Play |
|---|---|---|---|---|---|
| D1 | Sunny | Hot | High | Weak | No |
| D2 | Sunny | Hot | High | Strong | No |
| D3 | Overcast | Mild | High | Weak | Yes |
| D4 | Rain | Cool | High | Weak | Yes |
| D5 | Rain | Cool | Normal | Weak | Yes |
| D6 | Rain | Cool | Normal | Strong | No |
| D7 | Overcast | Cool | Normal | Strong | Yes |
| D8 | Sunny | Mild | High | Weak | No |
| D9 | Sunny | Cool | Normal | Weak | Yes |
| D10 | Rain | Mild | Normal | Weak | Yes |
| D11 | Sunny | Mild | Normal | Strong | Yes |
| D12 | Overcast | Mild | High | Strong | Yes |
| D13 | Overcast | Hot | Normal | Weak | Yes |
| D14 | Rain | Mild | High | Strong | No |

Find the class (Can Play) for the following unseen data point:

| Outlook | Temp | Humidity | Wind |
|---|---|---|---|
| Overcast | Mild | Normal | Weak |

Model 1

Model 1 is trained on rows D1 to D10 of the dataset.

| Day | Outlook | Temp | Humidity | Wind | Can Play |
|---|---|---|---|---|---|
| D1 | Sunny | Hot | High | Weak | No |
| D2 | Sunny | Hot | High | Strong | No |
| D3 | Overcast | Mild | High | Weak | Yes |
| D4 | Rain | Cool | High | Weak | Yes |
| D5 | Rain | Cool | Normal | Weak | Yes |
| D6 | Rain | Cool | Normal | Strong | No |
| D7 | Overcast | Cool | Normal | Strong | Yes |
| D8 | Sunny | Mild | High | Weak | No |
| D9 | Sunny | Cool | Normal | Weak | Yes |
| D10 | Rain | Mild | Normal | Weak | Yes |
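The walkthrough does not say how the rows for Model 1 were chosen (it uses D1 to D10, with no repeats). In the standard Random Forest recipe, each tree is instead trained on a bootstrap sample: as many rows as the dataset has, drawn at random with replacement. A minimal sketch using Python's `random` module, where `bootstrap_sample` is a hypothetical helper name:

```python
import random


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows uniformly at random, with replacement."""
    return rng.choices(rows, k=len(rows))


days = [f"D{i}" for i in range(1, 15)]  # the 14 training rows, D1..D14
rng = random.Random(42)                 # seeded so the sample is reproducible
sample = bootstrap_sample(days, rng)
print(sample)  # some days usually appear more than once, others not at all
```

Training each tree on a different sample is what makes the trees differ from one another, so their combined vote depends less on any single tree.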

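Set (S): the 10 rows of Model 1 (6 Yes, 4 No)

$E(S) = -\frac{6}{10}\log_2\frac{6}{10} - \frac{4}{10}\log_2\frac{4}{10} \approx 0.971$

Calculate the information gain for every attribute.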
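For reference, these are the standard ID3 definitions of entropy and information gain, where $p_c$ is the fraction of rows in $S$ whose Can Play value is $c$, and $S_v$ is the subset of $S$ in which attribute $A$ takes the value $v$ (an entropy of 0 means the subset is pure):

```latex
E(S) = -\sum_{c \in \{\text{Yes},\,\text{No}\}} p_c \log_2 p_c

\text{Gain}(S, A) = E(S) - \sum_{v \in \text{Values}(A)} \frac{|S_v|}{|S|}\, E(S_v)
```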

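Attribute 1 Outlook

| Outlook | Can Play | Entropy |
|---|---|---|
| Sunny | [No, No, No, Yes] | E = 0.811 |
| Overcast | [Yes, Yes] | E = 0 (pure) |
| Rain | [Yes, Yes, Yes, No] | E = 0.811 |

For example, for Sunny: $E = -\frac{3}{4}\log_2\frac{3}{4} - \frac{1}{4}\log_2\frac{1}{4} \approx 0.811$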
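The entropy values in this table can be checked with a short Python function; this is a sketch, and `entropy` is an illustrative name rather than a library call:

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Base-2 entropy of a list of class labels: sum of p * log2(1/p)."""
    n = len(labels)
    return sum((c / n) * log2(n / c) for c in Counter(labels).values())


# Outlook partitions of Set (S)
print(round(entropy(["No", "No", "No", "Yes"]), 3))    # 0.811
print(round(entropy(["Yes", "Yes"]), 3))               # 0.0 (pure)
print(round(entropy(["Yes", "Yes", "Yes", "No"]), 3))  # 0.811
```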

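$\text{Gain}(S, \text{Outlook}) = 0.971 - \left(\frac{4}{10}\cdot 0.811 + \frac{2}{10}\cdot 0 + \frac{4}{10}\cdot 0.811\right) \approx 0.322$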

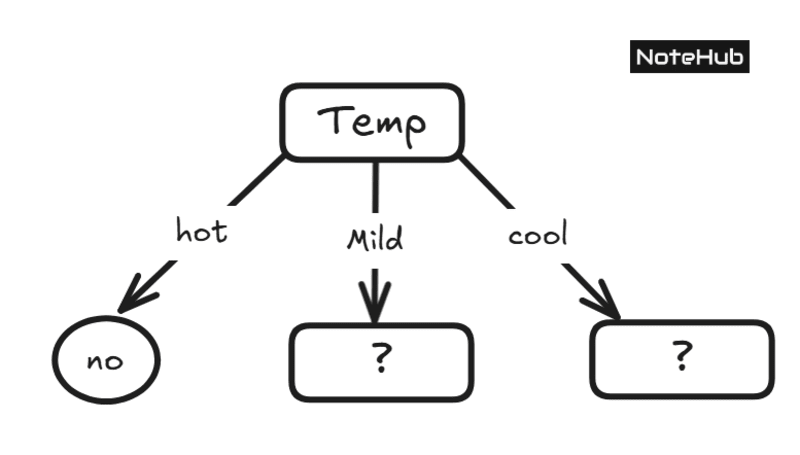
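The gain is the parent entropy minus the size-weighted average of the child entropies. A sketch that reproduces Gain(S, Outlook) from the Outlook label lists (`information_gain` is a hypothetical helper; the parent set is rebuilt by concatenating the partitions):

```python
from collections import Counter
from math import log2


def entropy(labels):
    n = len(labels)
    return sum((c / n) * log2(n / c) for c in Counter(labels).values())


def information_gain(partitions):
    """E(parent) minus the size-weighted entropy of its partitions."""
    parent = [label for part in partitions for label in part]
    n = len(parent)
    weighted = sum(len(part) / n * entropy(part) for part in partitions)
    return entropy(parent) - weighted


# Can Play labels of Set (S), split by Outlook
sunny = ["No", "No", "No", "Yes"]
overcast = ["Yes", "Yes"]
rain = ["Yes", "Yes", "Yes", "No"]
print(round(information_gain([sunny, overcast, rain]), 3))  # 0.322
```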

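Attribute 2 Temp

| Temp | Can Play | Entropy |
|---|---|---|
| Hot | [No, No] | E = 0 (pure) |
| Mild | [Yes, Yes, No] | E = 0.918 |
| Cool | [Yes, Yes, Yes, Yes, No] | E = 0.722 |

$\text{Gain}(S, \text{Temp}) = 0.971 - \left(\frac{2}{10}\cdot 0 + \frac{3}{10}\cdot 0.918 + \frac{5}{10}\cdot 0.722\right) \approx 0.335$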

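Attribute 3 Humidity

| Humidity | Can Play | Entropy |
|---|---|---|
| High | [No, No, No, Yes, Yes] | E = 0.971 |
| Normal | [No, Yes, Yes, Yes, Yes] | E = 0.722 |

$\text{Gain}(S, \text{Humidity}) = 0.971 - \left(\frac{5}{10}\cdot 0.971 + \frac{5}{10}\cdot 0.722\right) \approx 0.125$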

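Attribute 4 Wind

| Wind | Can Play | Entropy |
|---|---|---|
| Weak | [No, No, Yes, Yes, Yes, Yes, Yes] | E = 0.863 |
| Strong | [No, No, Yes] | E = 0.918 |

$\text{Gain}(S, \text{Wind}) = 0.971 - \left(\frac{7}{10}\cdot 0.863 + \frac{3}{10}\cdot 0.918\right) \approx 0.091$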

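Temp has the maximum gain, so it becomes the root node of Model 1's tree. The Hot branch is already pure ([No, No]), so it becomes a leaf labeled No; the Mild and Cool branches are split further.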

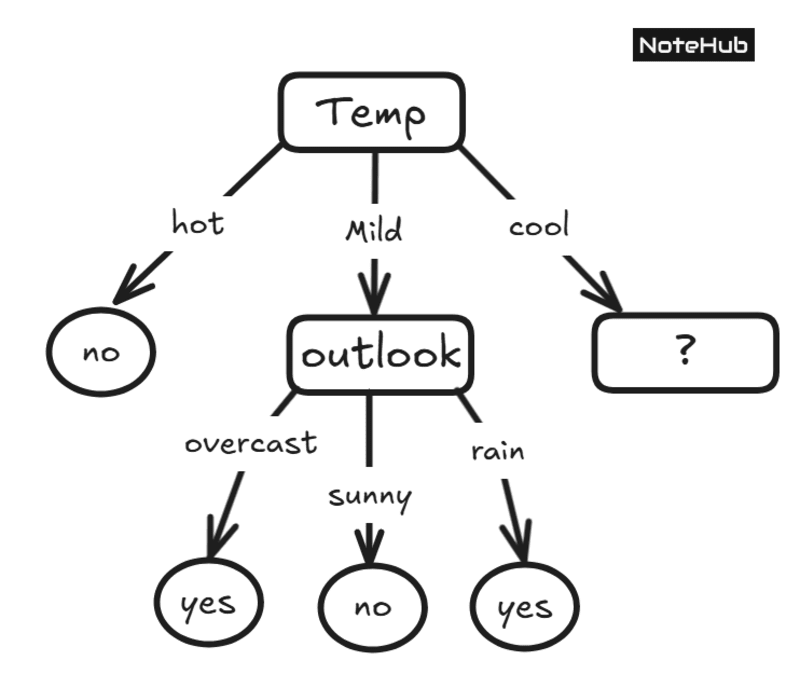
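The whole root-selection step can be sketched in Python: compute the gain of every attribute on Set (S) and keep the largest. The rows below are Model 1's D1 to D10, with D5 labeled Yes and D6 labeled No as in the full dataset; the names `ROWS`, `ATTRIBUTES`, and `gain` are illustrative. The printed gains should agree with the hand calculation up to rounding.

```python
from collections import Counter, defaultdict
from math import log2

ATTRIBUTES = ["Outlook", "Temp", "Humidity", "Wind"]

# Model 1 rows D1..D10: (Outlook, Temp, Humidity, Wind, Can Play)
ROWS = [
    ("Sunny", "Hot", "High", "Weak", "No"),
    ("Sunny", "Hot", "High", "Strong", "No"),
    ("Overcast", "Mild", "High", "Weak", "Yes"),
    ("Rain", "Cool", "High", "Weak", "Yes"),
    ("Rain", "Cool", "Normal", "Weak", "Yes"),
    ("Rain", "Cool", "Normal", "Strong", "No"),
    ("Overcast", "Cool", "Normal", "Strong", "Yes"),
    ("Sunny", "Mild", "High", "Weak", "No"),
    ("Sunny", "Cool", "Normal", "Weak", "Yes"),
    ("Rain", "Mild", "Normal", "Weak", "Yes"),
]


def entropy(labels):
    n = len(labels)
    return sum((c / n) * log2(n / c) for c in Counter(labels).values())


def gain(rows, i):
    """Information gain of splitting rows on the attribute in column i."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[i]].append(row[-1])  # collect Can Play labels per value
    weighted = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return entropy([row[-1] for row in rows]) - weighted


gains = {name: gain(ROWS, i) for i, name in enumerate(ATTRIBUTES)}
for name, g in gains.items():
    print(f"Gain(S, {name}) = {g:.4f}")
print("Root:", max(gains, key=gains.get))  # Root: Temp
```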

Branch: Temp = Mild

Set (S1): rows of Model 1 with Temp = Mild (2 Yes, 1 No), E(S1) = 0.918

| Outlook | Temp | Humidity | Wind | Can Play |
|---|---|---|---|---|
| Overcast | Mild | High | Weak | Yes |
| Sunny | Mild | High | Weak | No |
| Rain | Mild | Normal | Weak | Yes |

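Attribute 1 Outlook

| Outlook | Can Play | Entropy |
|---|---|---|
| Overcast | [Yes] | E = 0 (pure) |
| Sunny | [No] | E = 0 (pure) |
| Rain | [Yes] | E = 0 (pure) |

$\text{Gain}(S_1, \text{Outlook}) = 0.918 - 0 = 0.918$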

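Attribute 2 Humidity

| Humidity | Can Play | Entropy |
|---|---|---|
| High | [Yes, No] | E = 1 |
| Normal | [Yes] | E = 0 (pure) |

$\text{Gain}(S_1, \text{Humidity}) = 0.918 - \left(\frac{2}{3}\cdot 1 + \frac{1}{3}\cdot 0\right) \approx 0.251$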

Attribute 3 Wind

Every row in S1 has Wind = Weak, so splitting on Wind leaves the set unchanged and the gain is 0:

$\text{Gain}(S_1, \text{Wind}) = 0.918 - 1 \cdot 0.918 = 0$

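Outlook has the maximum gain, so the Temp = Mild branch splits on Outlook. Each resulting subset is pure, giving the leaves Overcast → Yes, Sunny → No, and Rain → Yes.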
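Repeating the gain-and-split step on every impure branch, and stopping at pure subsets, is the recursive ID3 procedure. The sketch below builds Model 1's tree from rows D1 to D10 (with D5 labeled Yes and D6 labeled No as in the full dataset) and classifies the unseen point; `id3`, `split`, `gain`, and `predict` are illustrative names. Because the unseen point has Temp = Mild and Outlook = Overcast, its path ends at the pure Overcast leaf of the Mild branch, so Model 1 votes Yes.

```python
from collections import Counter, defaultdict
from math import log2

ATTRIBUTES = ["Outlook", "Temp", "Humidity", "Wind"]

# Model 1 rows D1..D10: (Outlook, Temp, Humidity, Wind, Can Play)
ROWS = [
    ("Sunny", "Hot", "High", "Weak", "No"),
    ("Sunny", "Hot", "High", "Strong", "No"),
    ("Overcast", "Mild", "High", "Weak", "Yes"),
    ("Rain", "Cool", "High", "Weak", "Yes"),
    ("Rain", "Cool", "Normal", "Weak", "Yes"),
    ("Rain", "Cool", "Normal", "Strong", "No"),
    ("Overcast", "Cool", "Normal", "Strong", "Yes"),
    ("Sunny", "Mild", "High", "Weak", "No"),
    ("Sunny", "Cool", "Normal", "Weak", "Yes"),
    ("Rain", "Mild", "Normal", "Weak", "Yes"),
]


def entropy(labels):
    n = len(labels)
    return sum((c / n) * log2(n / c) for c in Counter(labels).values())


def split(rows, attr):
    """Group rows by their value of the named attribute."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[ATTRIBUTES.index(attr)]].append(row)
    return groups


def gain(rows, attr):
    weighted = sum(len(g) / len(rows) * entropy([r[-1] for r in g])
                   for g in split(rows, attr).values())
    return entropy([r[-1] for r in rows]) - weighted


def id3(rows, attrs):
    """Return a leaf label, or a node (attribute, {value: subtree})."""
    labels = [r[-1] for r in rows]
    if len(set(labels)) == 1:  # pure subset: make a leaf
        return labels[0]
    if not attrs:              # nothing left to split on: majority label
        return Counter(labels).most_common(1)[0][0]
    best = max(attrs, key=lambda a: gain(rows, a))
    rest = [a for a in attrs if a != best]
    return (best, {v: id3(g, rest) for v, g in split(rows, best).items()})


def predict(tree, point):
    """Follow the point's attribute values down to a leaf label."""
    while isinstance(tree, tuple):
        attr, children = tree
        tree = children[point[attr]]
    return tree


tree = id3(ROWS, ATTRIBUTES)
unseen = {"Outlook": "Overcast", "Temp": "Mild", "Humidity": "Normal", "Wind": "Weak"}
print(tree[0])                # Temp
print(predict(tree, unseen))  # Yes
```

This covers a single tree only; `predict` raises `KeyError` if a point carries an attribute value that never reached that branch during training.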

Branch: Temp = Cool

Set (S2): rows of Model 1 with Temp = Cool (4 Yes, 1 No), E(S2) = 0.722

| Outlook | Humidity | Wind | Can Play |
|---|---|---|---|
| Rain | High | Weak | Yes |
| Rain | Normal | Weak | Yes |
| Rain | Normal | Strong | No |
| Overcast | Normal | Strong | Yes |
| Sunny | Normal | Weak | Yes |

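Attribute 1 Outlook

| Outlook | Can Play | Entropy |
|---|---|---|
| Overcast | [Yes] | E = 0 (pure) |
| Sunny | [Yes] | E = 0 (pure) |
| Rain | [Yes, Yes, No] | E = 0.918 |

$\text{Gain}(S_2, \text{Outlook}) = 0.722 - \left(\frac{1}{5}\cdot 0 + \frac{1}{5}\cdot 0 + \frac{3}{5}\cdot 0.918\right) \approx 0.171$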

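Attribute 2 Humidity

| Humidity | Can Play | Entropy |
|---|---|---|
| High | [Yes] | E = 0 (pure) |
| Normal | [Yes, No, Yes, Yes] | E = 0.811 |

$\text{Gain}(S_2, \text{Humidity}) = 0.722 - \left(\frac{1}{5}\cdot 0 + \frac{4}{5}\cdot 0.811\right) \approx 0.073$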
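A quick numerical check of the two S2 gains, as a Python sketch (`entropy` and `gain` are illustrative helpers; the rows are Set (S2) without the Temp column):

```python
from collections import Counter, defaultdict
from math import log2


def entropy(labels):
    n = len(labels)
    return sum((c / n) * log2(n / c) for c in Counter(labels).values())


def gain(rows, i):
    """Information gain of splitting rows on the attribute in column i."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[i]].append(row[-1])  # collect Can Play labels per value
    weighted = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return entropy([row[-1] for row in rows]) - weighted


# Set (S2): (Outlook, Humidity, Wind, Can Play)
S2 = [
    ("Rain", "High", "Weak", "Yes"),
    ("Rain", "Normal", "Weak", "Yes"),
    ("Rain", "Normal", "Strong", "No"),
    ("Overcast", "Normal", "Strong", "Yes"),
    ("Sunny", "Normal", "Weak", "Yes"),
]
print(f"E(S2) = {entropy([r[-1] for r in S2]):.3f}")  # E(S2) = 0.722
print(f"Gain(S2, Outlook) = {gain(S2, 0):.3f}")        # Gain(S2, Outlook) = 0.171
print(f"Gain(S2, Humidity) = {gain(S2, 1):.3f}")       # Gain(S2, Humidity) = 0.073
```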