Natural Language Processing (NLP)

NLP and Text Processing

NLP stands at the intersection of linguistics, computer science, and AI. It enables machines to understand, interpret, and respond to human language.

NLP applications range from chatbots and language translation to sentiment analysis and content generation. It uses computational techniques for processing and analyzing human language.

Combining Linguistics

It combines linguistic theory and computational methods. It involves developing algorithms for automatic language analysis, processing, and generation.

This field bridges the gap between language and machines, enabling meaningful interaction.

NLP is primarily used on text-based datasets.

NLP Uses and Examples

NLP is primarily used on text-based datasets.

Examples:

- Chatbots

- Email filters

- Sentiment analysis

- Machine translation

Text Processing Concepts

1. Tokenization:

Tokenization is the process of splitting a sentence or string into smaller units called tokens.

- A token is the smallest unit of a sentence.

- Example:

- Sentence: “Ram is a good boy”

- Word tokens: Ram, is, a, good, boy

- Character tokens: R, a, m, i, s, …

2. N-gram Tokenization:

- Splits text into fixed-size chunks of N tokens.

- Example (bi-gram): "Ram is", "is a", "a man"

3. Stop Words:

- Words with little or no meaning in a sentence are called stop words.

- Common examples: the, is, in, over

- Removing stop words helps in:

- Text classification

- Information retrieval

- Search engine keyword extraction

Example:

- Sentence: “The quick brown fox jumps over the lazy dog”

- Stop words: the, over

- Content words: quick, brown, fox, jumps, lazy, dog

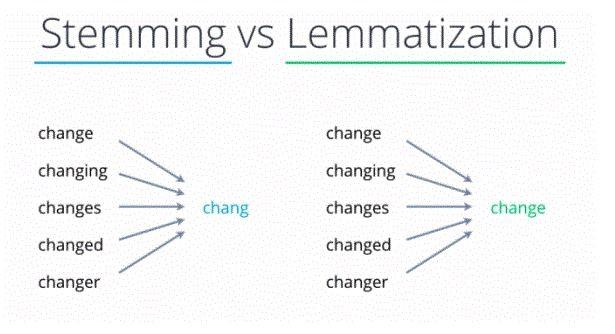

4. Lemmatization

Lemmatization performs morphological analysis.

It is the process of grouping together different inflected forms of a word so they can be analyzed as a single item (lemma).

Applications:

- Used in compact indexing

- Used in comprehensive retrieval systems, such as search engines

Advantages:

- Helps focus on meaningful words rather than different word forms

Disadvantages:

- Can be slow for large datasets

5. Stemming

- Definition: Stemming is the process of reducing words to their root form.

- Example:

finally → final, finalize → fina - Advantages: Fast and simple

- Disadvantages: Removes meaning sometimes; may produce non-words

- Purpose: Reduces words to a common base form for easier processing.

Bag of Words (BoW)

- NLP data (text) needs to be converted into numerical form for machine learning.

- Bag of Words Model:

- Converts text (sentence, paragraph, document) into a collection of words

- Ignores word order and grammar

- Focuses on word frequency

- Applications: Text classification, sentiment analysis, clustering

Example:

Text:

"He's a good boy. She is a good girl. Boys and girls are good."

Vocabulary Count:

| Word | Count |

| good | 3 |

| boy | 2 |

| girl | 2 |

BoW Representation (f₁=good, f₂=boy, f₃=girl):

| good | boy | girl |

| 1 | 1 | 0 |

| 1 | 0 | 1 |

| 1 | 1 | 1 |

N-Gram

- Definition: An N-gram is a contiguous sequence of N items (words or characters) from text.

"This is a sentence" - Types:

- Unigram: Single words

"This", "is", "a", "sentence" - Bigram: Two consecutive words

"This is", "is a", "a sentence" - Trigram: Three consecutive words

"This is a", "is a sentence"

- Unigram: Single words

- Uses: Captures context, improves language models, enhances text prediction, information retrieval