Regression

- linear regression

- polynomial regression

- logistic regression

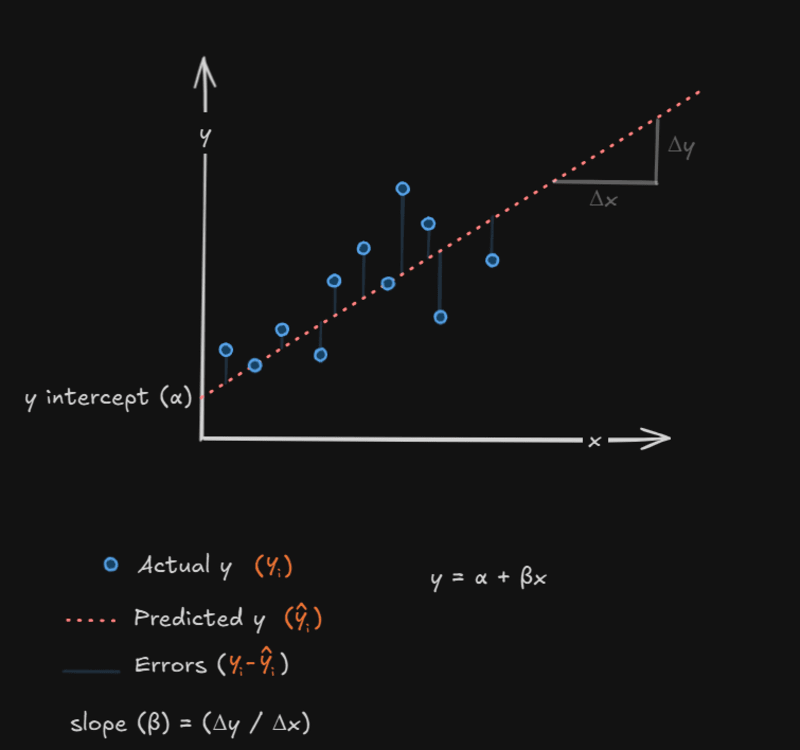

Linear regression is a machine learning method that fits a straight line to a set of data points that cluster around a line. The fitted line is then used to predict the outcome for new input values.

Equation of line:

$$y = a + bx$$

- where $a$ is a scalar (the intercept) that shifts the line up or down,

- and $b$ (coefficient of $x$) is the slope of the line.

| $x$ (Input) | $x_1$ | $x_2$ | $\dots$ | $x_n$ |
|---|---|---|---|---|
| $y$ (Outcome) | $y_1$ | $y_2$ | $\dots$ | $y_n$ |

In the figure, $n$ data points are plotted. We are trying to fit a straight line to this set of discrete data points.

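- Given Data: $(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)$

- $y_i$ (Actual Data): The value of the output variable where $x = x_i$, as provided by the data set.

- Line Equation: $y = a + bx$, with intercept $a$ and slope $b$

- $\hat{y}_i$ (Predicted Data): The value of the outcome as predicted by the line, $\hat{y}_i = a + b x_i$, corresponding to $x = x_i$.

- Error Calculation:

- Error: $e_i = y_i - \hat{y}_i$, the difference between $y_i$ (actual data point) and $\hat{y}_i$ (predicted value from the line).

- Square of Errors: $e_i^2 = (y_i - \hat{y}_i)^2$

- Sum of Square of Errors: $S = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$

Where: $\hat{y}_i = a + b x_i$, so that

$$S(a, b) = \sum_{i=1}^{n} \bigl(y_i - (a + b x_i)\bigr)^2$$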
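The sum of squared errors can be sketched in a few lines of Python. This is a minimal illustration, assuming the line is written as `y = a + b*x` (intercept `a`, slope `b`); the function name `sum_squared_errors` and the sample data are hypothetical and chosen only for this example.

```python
def sum_squared_errors(xs, ys, a, b):
    """Return S = sum((y_i - (a + b*x_i))**2) for the line y = a + b*x."""
    if len(xs) != len(ys):
        raise ValueError("xs and ys must have the same length")
    total = 0.0
    for x, y in zip(xs, ys):
        predicted = a + b * x   # value on the line at x_i
        error = y - predicted   # actual minus predicted
        total += error ** 2     # accumulate the squared error
    return total


# Hypothetical sample data, made up for illustration: exactly y = 1 + 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

print(sum_squared_errors(xs, ys, a=1.0, b=2.0))  # 0.0 (the line passes through every point)
print(sum_squared_errors(xs, ys, a=0.0, b=2.0))  # 4.0 (each of the 4 errors equals 1)
```

A line that misses the points gives a larger value, which is why this sum is used to measure the quality of the fit.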

Goal

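We now want to find the values of the intercept $a$ and the slope $b$ for which the sum of squared errors $S$ is minimum. At a minimum, both partial derivatives of $S$ vanish, so we set $\frac{\partial S}{\partial a} = 0$ and $\frac{\partial S}{\partial b} = 0$.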

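Partial Derivative with respect to $a$ (the intercept):

Starting from $S = \sum_{i=1}^{n} \bigl(y_i - (a + b x_i)\bigr)^2$ and using the power rule:

- Differentiate $S$ with respect to $a$:

$$\frac{\partial S}{\partial a} = \frac{\partial}{\partial a} \sum_{i=1}^{n} \bigl(y_i - (a + b x_i)\bigr)^2$$

- Apply the Chain Rule:

$$\frac{\partial S}{\partial a} = \sum_{i=1}^{n} 2 \bigl(y_i - (a + b x_i)\bigr) \cdot \frac{\partial}{\partial a} \bigl(y_i - (a + b x_i)\bigr)$$

- Differentiate the Inner Term:

$$\frac{\partial}{\partial a} \bigl(y_i - (a + b x_i)\bigr) = -1$$

- Put the inner term:

$$\frac{\partial S}{\partial a} = \sum_{i=1}^{n} 2 \bigl(y_i - (a + b x_i)\bigr) (-1)$$

- Simplify:

$$\frac{\partial S}{\partial a} = -2 \sum_{i=1}^{n} \bigl(y_i - (a + b x_i)\bigr)$$

Set the derivative to zero and divide both sides by $-2$:

$$\sum_{i=1}^{n} \bigl(y_i - (a + b x_i)\bigr) = 0$$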

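Partial Derivative with respect to $b$ (the slope):

- Differentiate the Error Function $S = \sum_{i=1}^{n} \bigl(y_i - (a + b x_i)\bigr)^2$ with respect to $b$:

$$\frac{\partial S}{\partial b} = \frac{\partial}{\partial b} \sum_{i=1}^{n} \bigl(y_i - (a + b x_i)\bigr)^2$$

- Apply the Chain Rule:

$$\frac{\partial S}{\partial b} = \sum_{i=1}^{n} 2 \bigl(y_i - (a + b x_i)\bigr) \cdot \frac{\partial}{\partial b} \bigl(y_i - (a + b x_i)\bigr)$$

- Differentiate the Inner Term:

$$\frac{\partial}{\partial b} \bigl(y_i - (a + b x_i)\bigr) = -x_i$$

- Put the inner term:

$$\frac{\partial S}{\partial b} = \sum_{i=1}^{n} 2 \bigl(y_i - (a + b x_i)\bigr) (-x_i)$$

- Simplify:

$$\frac{\partial S}{\partial b} = -2 \sum_{i=1}^{n} x_i \bigl(y_i - (a + b x_i)\bigr)$$

Set the derivative to zero and divide both sides by $-2$:

$$\sum_{i=1}^{n} x_i \bigl(y_i - (a + b x_i)\bigr) = 0$$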
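One way to sanity-check the two partial derivatives is to compare them against a numerical approximation. The sketch below assumes the line is `y = a + b*x` and that the derivatives are `-2 * sum(y_i - (a + b*x_i))` with respect to `a` and `-2 * sum(x_i * (y_i - (a + b*x_i)))` with respect to `b`. The helper names (`sse`, `grad_sse`, `numeric_grad`), the step size `h`, and the data are hypothetical choices for illustration.

```python
def sse(xs, ys, a, b):
    """Sum of squared errors for the line y = a + b*x."""
    return sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))


def grad_sse(xs, ys, a, b):
    """Analytic partial derivatives (dS/da, dS/db) obtained via the chain rule."""
    residuals = [y - (a + b * x) for x, y in zip(xs, ys)]
    dS_da = -2.0 * sum(residuals)
    dS_db = -2.0 * sum(x * r for x, r in zip(xs, residuals))
    return dS_da, dS_db


def numeric_grad(xs, ys, a, b, h=1e-6):
    """Central-difference approximation of the same two derivatives."""
    dS_da = (sse(xs, ys, a + h, b) - sse(xs, ys, a - h, b)) / (2 * h)
    dS_db = (sse(xs, ys, a, b + h) - sse(xs, ys, a, b - h)) / (2 * h)
    return dS_da, dS_db


# Hypothetical noisy data and an arbitrary (non-optimal) choice of a and b.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.1, 5.9, 8.2]
analytic = grad_sse(xs, ys, a=0.5, b=1.5)
numeric = numeric_grad(xs, ys, a=0.5, b=1.5)
print("analytic:", analytic)
print("numeric: ", numeric)
```

If the derivation is right, the two printed pairs should agree to several decimal places.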

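Equation 1 (from the derivative with respect to the intercept $a$):

$$\sum_{i=1}^{n} y_i = n a + b \sum_{i=1}^{n} x_i$$

Equation 2 (from the derivative with respect to the slope $b$):

$$\sum_{i=1}^{n} x_i y_i = a \sum_{i=1}^{n} x_i + b \sum_{i=1}^{n} x_i^2$$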
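The two equations form a linear system in the intercept and slope, which can be solved directly. The sketch below assumes they take the standard least-squares form `sum(y) = n*a + b*sum(x)` and `sum(x*y) = a*sum(x) + b*sum(x*x)` for the line `y = a + b*x`. The function name `fit_line` and the sample data are hypothetical.

```python
def fit_line(xs, ys):
    """Solve the two normal equations for (a, b) in y = a + b*x."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")

    sum_x = sum(xs)
    sum_y = sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_xx = sum(x * x for x in xs)

    # Eliminating a from the two equations gives the slope b.
    denom = n * sum_xx - sum_x ** 2
    if denom == 0:
        raise ValueError("all x values are identical, so the slope is undefined")
    b = (n * sum_xy - sum_x * sum_y) / denom

    # Substituting b back into Equation 1 gives the intercept a.
    a = (sum_y - b * sum_x) / n
    return a, b


# Hypothetical sample data, made up for illustration: exactly y = 1 + 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
a, b = fit_line(xs, ys)
print(a, b)  # 1.0 2.0
```

Because the sample points lie exactly on a line, the recovered intercept and slope match it exactly. With noisy data the result is the line that minimizes the sum of squared errors.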