KNN Classification / Classifier

Picture this: you’re at a crowded Indian wedding, trying to figure out which side of the family a guest belongs to. You don’t ask for their ID; you just look at who they’re sitting with. If they’re surrounded by the groom’s cousins cracking jokes, they’re probably part of the Barat. If they’re huddled with the aunts discussing the catering, they’re likely from the bride’s side. Judging someone by their immediate neighbors is exactly how the K-Nearest Neighbors (KNN) algorithm works. It is perhaps the most "human" algorithm in Data Science, relying on the simple intuition that similar things tend to stay close together.

Euclidean Distance

Euclidean distance in n dimensions (one dimension per feature)

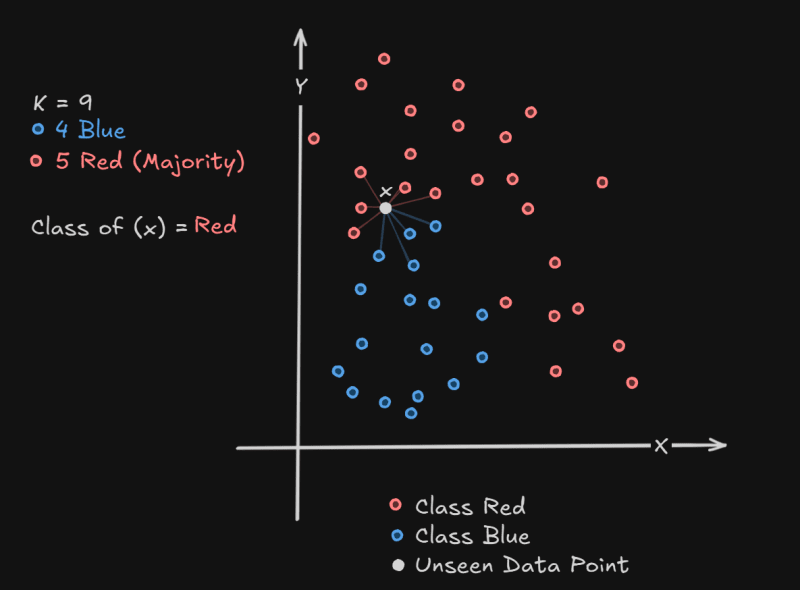
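For two points p = (p₁, …, pₙ) and q = (q₁, …, qₙ) described by n features, the standard Euclidean distance is written below; n counts features here and is unrelated to the K of KNN. As a worked check, the distance from X(4,4) to A(1,2) in the first example comes out to √13 ≈ 3.61.

$$
d(p, q) = \sqrt{\sum_{i=1}^{n} (p_i - q_i)^2}
$$

$$
d\big((4,4), (1,2)\big) = \sqrt{(4-1)^2 + (4-2)^2} = \sqrt{9 + 4} = \sqrt{13} \approx 3.61
$$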

In the normal (unweighted) KNN classifier, we find the K data points nearest to the new point X, check the class of each of these K neighbors, and assign X the class held by the majority of them. K is usually chosen to be an odd number so that a vote between two classes cannot end in a tie.
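The steps above can be sketched in Python as a small function. This is a minimal sketch, not a library implementation: the names `euclidean` and `knn_classify` and the toy training points are made up for illustration, and the training set is assumed to be a list of `(point, label)` pairs.

```python
import math
from collections import Counter


def euclidean(p, q):
    """Euclidean distance between two points with any number of features."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


def knn_classify(train, x, k):
    """Plain (unweighted) KNN: majority class among the k nearest points.

    `train` is a list of (point, label) pairs; `x` is the point to classify.
    """
    # Sort the training points by distance to x (sorted() is stable,
    # so points at equal distance keep their original order) and keep k.
    neighbors = sorted(train, key=lambda item: euclidean(item[0], x))[:k]
    # Count the labels of the k nearest neighbors and return the most common.
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: two Red points near the origin, three Blue points far away.
train = [((1, 1), "Red"), ((1, 2), "Red"), ((5, 5), "Blue"), ((6, 5), "Blue"), ((5, 6), "Blue")]
print(knn_classify(train, (2, 2), k=3))
```

With k = 3, the two Red points at (1, 1) and (1, 2) outvote the single Blue point at (5, 5), so the call prints `Red`.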

Let’s say we have the following data points:

| Point | X | Y | Class |
|-------|---|---|-------|
| A | 1 | 2 | 🟥 Red |
| B | 2 | 3 | 🟥 Red |
| C | 3 | 1 | 🟦 Blue |
| D | 6 | 5 | 🟥 Red |
| E | 7 | 7 | 🟥 Red |
| F | 8 | 6 | 🟥 Red |
| G | 3 | 4 | 🟦 Blue |
| H | 4 | 3 | 🟦 Blue |
| I | 5 | 2 | 🟦 Blue |
| J | 6 | 3 | 🟥 Red |
| X | 4 | 4 | ❓ Unknown |

Now we want to classify point X = (4, 4) using K = 5

📏 Step 1: Calculate Euclidean Distances from X(4,4)

| Point | Coordinates | Distance to X(4,4) | Class |
|-------|-------------|--------------------|-------|
| A | (1,2) | 3.61 | 🟥 |
| B | (2,3) | 2.24 | 🟥 |
| C | (3,1) | 3.16 | 🟦 |
| D | (6,5) | 2.24 | 🟥 |
| E | (7,7) | 4.24 | 🟥 |
| F | (8,6) | 4.47 | 🟥 |
| G | (3,4) | 1.0 | 🟦 |
| H | (4,3) | 1.0 | 🟦 |
| I | (5,2) | 2.24 | 🟦 |
| J | (6,3) | 2.24 | 🟥 |
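A short sketch that reproduces the distance table for X(4,4). It assumes Python 3.8 or later for `math.dist`; the dictionary layout of the points is just a convenient choice for this example.

```python
import math

# Training points from the table above: name -> ((x, y), class)
points = {
    "A": ((1, 2), "Red"), "B": ((2, 3), "Red"), "C": ((3, 1), "Blue"),
    "D": ((6, 5), "Red"), "E": ((7, 7), "Red"), "F": ((8, 6), "Red"),
    "G": ((3, 4), "Blue"), "H": ((4, 3), "Blue"), "I": ((5, 2), "Blue"),
    "J": ((6, 3), "Red"),
}
x = (4, 4)

# Euclidean distance from every point to X, rounded to 2 decimals as in the table.
distances = {
    name: round(math.dist(coords, x), 2) for name, (coords, _) in points.items()
}
for name, d in distances.items():
    print(f"{name}: {d}")
```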

🔍 Step 2: Choose the K (5) Nearest Neighbors

Sorted by distance:

- G (1.0) 🟦

- H (1.0) 🟦

- B (2.24) 🟥

- D (2.24) 🟥

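- I (2.24) 🟦

💡 B, D, I and J are all tied at √5 ≈ 2.24, but K = 5 leaves room for only three of them. Here the tie is broken by table order, so J is left out. Had J been chosen instead of I, the vote in Step 3 would be 3 Red to 2 Blue, so the way ties at the K-th distance are broken can change the prediction.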
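Selecting the K nearest points is just a sort by distance. This sketch (the helper name `squared_distance` is hypothetical) ranks by squared distance, which gives the same order as the true distance while keeping the tied values exact integers, and relies on Python's `sorted()` being stable to break ties by table order:

```python
# Training points from the first example: (name, (x, y), class)
points = [
    ("A", (1, 2), "Red"), ("B", (2, 3), "Red"), ("C", (3, 1), "Blue"),
    ("D", (6, 5), "Red"), ("E", (7, 7), "Red"), ("F", (8, 6), "Red"),
    ("G", (3, 4), "Blue"), ("H", (4, 3), "Blue"), ("I", (5, 2), "Blue"),
    ("J", (6, 3), "Red"),
]
x = (4, 4)
k = 5


def squared_distance(p, q):
    # Squared Euclidean distance: ranks points in the same order as the
    # distance itself and stays an exact integer for integer coordinates.
    return sum((a - b) ** 2 for a, b in zip(p, q))


# sorted() is stable: B, D, I and J all have squared distance 5, so they keep
# their table order and J falls just outside the first k.
ranked = sorted(points, key=lambda p: squared_distance(p[1], x))
nearest = ranked[:k]
print([name for name, _, _ in nearest])          # ['G', 'H', 'B', 'D', 'I']
print([name for name, _, _ in ranked[k:k + 1]])  # ['J']
```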

🧠 Step 3: Voting (Majority Class)

From 5 nearest neighbors:

- 🟥 Red → 2 (B, D)

- 🟦 Blue → 3 (G, H, I)

✅ Majority = Blue, so

👉 X(4,4) is classified as 🟦 Blue
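The majority vote itself can be sketched with `collections.Counter`; the list below simply holds the classes of G, H, B, D and I from Step 2:

```python
from collections import Counter

# Classes of the five nearest neighbors: G, H, B, D, I.
neighbor_classes = ["Blue", "Blue", "Red", "Red", "Blue"]

votes = Counter(neighbor_classes)
print(votes)       # Counter({'Blue': 3, 'Red': 2})

# most_common(1) returns [(class, count)] for the class with the most votes.
prediction = votes.most_common(1)[0][0]
print(prediction)  # Blue
```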

🎯 Weighted KNN Classifier

Unlike normal KNN, where every neighbor gets an equal vote, Weighted KNN gives closer neighbors more influence in deciding the class of the new point.

💡 The weight is usually the inverse of the distance, w = 1/d, so a smaller distance means a higher weight. The inverse squared distance, w = 1/d², is another common choice.
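Both weighting schemes fit in a couple of lines. In this sketch the function names are hypothetical, and returning an infinite weight for a distance of 0 is an assumption made here to avoid dividing by zero; other implementations may treat that case differently.

```python
import math


def inverse_distance_weight(d):
    """w = 1/d: the closer the neighbor, the larger its weight."""
    # Assumption: a neighbor at distance 0 coincides with the new point,
    # so it gets an infinite weight and decides the class outright.
    return math.inf if d == 0 else 1.0 / d


def inverse_squared_distance_weight(d):
    """w = 1/d^2: favors close neighbors even more strongly than 1/d."""
    return math.inf if d == 0 else 1.0 / d ** 2


for d in (1.0, math.sqrt(2), 2.0):
    print(round(d, 2), round(inverse_distance_weight(d), 2),
          round(inverse_squared_distance_weight(d), 2))
```

For the distance 1.41 (√2) that appears in the weighted example, the two schemes give weights of about 0.71 and 0.5.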

🧪 Example: Weighted KNN

| Point | X | Y | Class |
|-------|---|---|-------|
| A | 2 | 3 | 🟥 Red |
| B | 3 | 2 | 🟦 Blue |
| C | 4 | 3 | 🟥 Red |
| D | 5 | 1 | 🟦 Blue |
| E | 3 | 5 | 🟥 Red |
| F | 5 | 4 | 🟦 Blue |
| X | 4 | 2 | ❓ Unknown |

👉 Classify point X = (4, 2) with K = 3

📏 Step 1: Compute Euclidean Distance to X(4,2)

| Point | Coordinates | Distance to X(4,2) | Class |
|-------|-------------|--------------------|-------|
| A | (2,3) | 2.24 | 🟥 |
| B | (3,2) | 1.0 | 🟦 |
| C | (4,3) | 1.0 | 🟥 |
| D | (5,1) | 1.41 | 🟦 |
| E | (3,5) | 3.16 | 🟥 |
| F | (5,4) | 2.24 | 🟦 |

Step 2: Pick K = 3 Nearest Neighbors

Sorted by distance:

- B (1.0) 🟦

- C (1.0) 🟥

- D (1.41) 🟦
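For larger datasets there is no need to sort every point just to keep K of them. This sketch uses `heapq.nsmallest` from the standard library, which returns the same result as `sorted(...)[:k]`, ties included, and reproduces the three neighbors listed above (Python 3.8+ is assumed for `math.dist`):

```python
import heapq
import math

# Training points from the weighted example: (name, (x, y), class)
points = [
    ("A", (2, 3), "Red"), ("B", (3, 2), "Blue"), ("C", (4, 3), "Red"),
    ("D", (5, 1), "Blue"), ("E", (3, 5), "Red"), ("F", (5, 4), "Blue"),
]
x = (4, 2)
k = 3

# nsmallest keeps only the k closest items instead of fully sorting the list;
# like sorted(), it preserves the original order among ties such as B and C.
nearest = heapq.nsmallest(k, points, key=lambda p: math.dist(p[1], x))
for name, coords, label in nearest:
    print(name, round(math.dist(coords, x), 2), label)
```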

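Step 3: Assign Weights

Using w = 1/d:

| Point | Distance | Class | Weight (1/d) |
|-------|----------|-------|--------------|
| B | 1.0 | 🟦 | 1.0 |
| C | 1.0 | 🟥 | 1.0 |
| D | 1.41 | 🟦 | 0.71 |

Step 4: Weighted Voting

- 🟦 Blue: 1.00 (B) + 0.71 (D) = 1.71

- 🟥 Red: 1.00 (C) = 1.00

With the inverse squared distance (w = 1/d²) instead, D's weight would be 0.5 and Blue's total 1.50; Blue still wins.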

✅ Weighted majority = Blue

👉 So, X(4,2) is classified as 🟦 Blue
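Putting the weighted steps together, here is a minimal sketch of a weighted KNN classifier. The function name and its `weight` parameter are hypothetical; the default weight 1/d assumes no training point lies exactly on the query point (d = 0 would divide by zero).

```python
import math
from collections import defaultdict


def weighted_knn_classify(train, x, k, weight=lambda d: 1.0 / d):
    """Weighted KNN: each of the k nearest neighbors votes with weight(d).

    `train` is a list of ((x, y), label) pairs. The default weight 1/d
    assumes d > 0 for every neighbor.
    """
    neighbors = sorted(train, key=lambda item: math.dist(item[0], x))[:k]
    scores = defaultdict(float)
    for coords, label in neighbors:
        # Add this neighbor's weight to its class's running total.
        scores[label] += weight(math.dist(coords, x))
    # The class with the largest total weight wins.
    return max(scores, key=scores.get), dict(scores)


train = [
    ((2, 3), "Red"), ((3, 2), "Blue"), ((4, 3), "Red"),
    ((5, 1), "Blue"), ((3, 5), "Red"), ((5, 4), "Blue"),
]
label, scores = weighted_knn_classify(train, (4, 2), k=3)
print(label, {c: round(s, 2) for c, s in scores.items()})

label_sq, scores_sq = weighted_knn_classify(train, (4, 2), k=3, weight=lambda d: 1.0 / d ** 2)
print(label_sq, {c: round(s, 2) for c, s in scores_sq.items()})
```

With the default 1/d weights the call reports Blue with totals of about 1.71 for Blue and 1.0 for Red; passing 1/d² instead gives 1.5 versus 1.0, so X(4,2) is Blue under either scheme.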