Algorithm Construct the decision tree using the algorithm for the given data set. S.no Age Competition Type Profit (Class) 1 Old Yes Soft Down 2 Old No Soft Down 3 Old No Hard Down 4 Mid Yes Soft Down 5 Mid Yes Hard Down 6 Mid No Hard Up 7 Mid No Soft Up 8 New Yes Soft Up 9 New No Hard Up 10 New No Soft Up

🔧 Step 1: Initial Entropy of Dataset E n t r o p y ( S ′ ) = − p U p l o g 2 ( p U p ) − p D o w n l o g 2 ( p D o w n ) Entropy(S^{\prime})=-p_{Up}log_2(p_{Up})-p_{Down}log_2(p_{Down}) E n t r o p y ( S ′ ) = − p U p l o g 2 ( p U p ) − p D o w n l o g 2 ( p D o w n ) Where:

Total = 10 Down = 5 (1 to 5)Up = 5 (6 to 10)So:

E n t r o p y ( S ′ ) = − 5 10 log 2 ( 5 10 ) − 5 10 log 2 ( 5 10 ) Entropy(S^{\prime})=-\frac{5}{10}\log_2\left(\frac{5}{10}\right)-\frac{5}{10}\log_2\left(\frac{5}{10}\right) E n t r o p y ( S ′ ) = − 10 5 log 2 ( 10 5 ) − 10 5 log 2 ( 10 5 ) = − 5 10 l o g 2 ( 1 2 ) − 5 10 l o g 2 ( 1 2 ) = -\frac{5}{10}log_2\left(\frac{1}{2}\right)-\frac{5}{10}log_2\left(\frac{1}{2}\right) = − 10 5 l o g 2 ( 2 1 ) − 10 5 l o g 2 ( 2 1 ) = − 5 10 l o g 2 ( 2 ) − 1 − 5 10 l o g 2 ( 2 ) − 1 = -\frac{5}{10}log_2\left(2\right)^{-1} - \frac{5}{10}log_2\left(2\right)^{-1} = − 10 5 l o g 2 ( 2 ) − 1 − 10 5 l o g 2 ( 2 ) − 1 = 5 10 l o g 2 ( 2 ) + 5 10 l o g 2 ( 2 ) =\frac{5}{10}log_2\left(2\right)+\frac{5}{10}log_2\left(2\right) = 10 5 l o g 2 ( 2 ) + 10 5 l o g 2 ( 2 ) = 5 10 ⋅ 1 + 5 10 ⋅ 1 =\frac{5}{10} \cdot 1 + \frac{5}{10} \cdot 1 = 10 5 ⋅ 1 + 10 5 ⋅ 1 ✅ Entropy() = 1

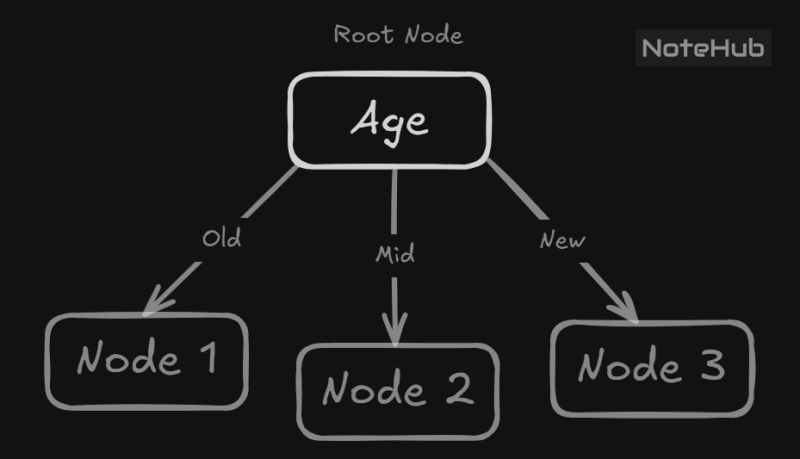

Attribute 1: Age (Old, Mid, New)

Old: = [Down, Down, Down]

E = − 3 3 log 2 3 3 E=-\frac{3}{3}\log_2\frac{3}{3} E = − 3 3 log 2 3 3

Mid : = [Down, Down, Up, Up]

= − 2 4 l o g 2 2 4 − 2 4 l o g 2 2 4 =-\frac{2}{4}log_2\frac{2}{4}-\frac{2}{4}log_2\frac{2}{4} = − 4 2 l o g 2 4 2 − 4 2 l o g 2 4 2 E = − 0.5 l o g 2 0.5 − 0.5 l o g 2 0.5 E = -0.5log_{2}0.5 - 0.5log_{2}0.5 E = − 0.5 l o g 2 0.5 − 0.5 l o g 2 0.5

New: = [Up, Up, Up]

E = − 3 3 l o g 2 3 3 E=-\frac{3}{3}log_2\frac{3}{3} E = − 3 3 l o g 2 3 3

= Entropy ( S ′ ) − ∣ S o l d ∣ ∣ S ∣ ⋅ Entropy ( S o l d ) − ∣ S m i d ∣ ∣ S ∣ ⋅ Entropy ( S m i d ) − ∣ S n e w ∣ ∣ S ∣ ⋅ Entropy ( S n e w ) =\text{Entropy}(S^{\prime})-\frac{|S_{old}|}{|S|}\cdot\text{Entropy}(S_{old})\\-\frac{|S_{mid}|}{|S|}\cdot\text{Entropy}(S_{mid})-\frac{|S_{new}|}{|S|}\cdot\text{Entropy}(S_{new}) = Entropy ( S ′ ) − ∣ S ∣ ∣ S o l d ∣ ⋅ Entropy ( S o l d ) − ∣ S ∣ ∣ S mi d ∣ ⋅ Entropy ( S mi d ) − ∣ S ∣ ∣ S n e w ∣ ⋅ Entropy ( S n e w ) = 1 − ( 3 10 ⋅ 0 + 4 10 ⋅ 1 + 3 10 ⋅ 0 ) = 1 - \left(\frac{3}{10}\cdot0 + \frac{4}{10} \cdot 1 + \frac{3}{10}\cdot 0\right) = 1 − ( 10 3 ⋅ 0 + 10 4 ⋅ 1 + 10 3 ⋅ 0 ) 1 − 0.4 = 0.6 1 - 0.4 = 0.6 1 − 0.4 = 0.6 ✅ Gain(Age) = 0.6

Attribute 2: Competition (Yes, No)

Yes : = [Down, Down, Down, Up]

= − 3 4 l o g 2 3 4 − 1 4 l o g 2 1 4 =-\frac{3}{4}log_2\frac{3}{4}-\frac{1}{4}log_2\frac{1}{4} = − 4 3 l o g 2 4 3 − 4 1 l o g 2 4 1 E = − 0.75 l o g 2 0.75 − 0.25 l o g 2 0.25 E = -0.75log_{2}0.75 - 0.25log_{2}0.25 E = − 0.75 l o g 2 0.75 − 0.25 l o g 2 0.25

No: = [Down, Down, Up, Up, Up, Up]

= − 2 6 l o g 2 2 6 − 4 6 l o g 2 4 6 =-\frac{2}{6}log_2\frac{2}{6}-\frac{4}{6}log_2\frac{4}{6} = − 6 2 l o g 2 6 2 − 6 4 l o g 2 6 4 E = − 0.333 l o g 2 0.333 − 0.666 l o g 2 0.666 E = -0.333log_{2}0.333 - 0.666log_{2}0.666 E = − 0.333 l o g 2 0.333 − 0.666 l o g 2 0.666 E ≈ 0.918 E \approx 0.918 E ≈ 0.918

= 1 − ( 4 10 ⋅ 0.811 + 6 10 ⋅ 0.918 ) =1-\left(\frac{4}{10}\cdot0.811+\frac{6}{10}\cdot0.918\right) = 1 − ( 10 4 ⋅ 0.811 + 10 6 ⋅ 0.918 ) ≈ 1 − ( 0.3244 + 0.5508 ) = 0.1248 \approx 1 - (0.3244 + 0.5508) = 0.1248 ≈ 1 − ( 0.3244 + 0.5508 ) = 0.1248 ✅ Gain(Competition) = 0.125

Attribute 3: Type (Soft, Hard)

Soft: = [Down, Down, Down, Up, Up, Up]

E = − 3 6 l o g 2 3 6 − 3 6 l o g 2 3 6 E=-\frac{3}{6}log_2\frac{3}{6}-\frac{3}{6}log_2\frac{3}{6} E = − 6 3 l o g 2 6 3 − 6 3 l o g 2 6 3 E = − 1 2 log 2 1 2 − 1 2 log 2 1 2 E = -\frac{1}{2}\log_{2}\frac{1}{2} -\frac{1}{2}\log_{2}\frac{1}{2} E = − 2 1 log 2 2 1 − 2 1 log 2 2 1 E = − 0.5 log 2 2 − 1 − 0.5 log 2 2 − 1 E = -0.5\log_{2}2^{-1} -0.5\log_{2}2^{-1} E = − 0.5 log 2 2 − 1 − 0.5 log 2 2 − 1 E = 0.5 ⋅ 1 + 0.5 ⋅ 1 E = 0.5 \cdot 1 + 0.5 \cdot 1 E = 0.5 ⋅ 1 + 0.5 ⋅ 1

Hard : = [Down, Down, Up, Up]

E = − 2 4 l o g 2 2 4 − 2 4 l o g 2 2 4 E=-\frac{2}{4}log_2\frac{2}{4}-\frac{2}{4}log_2\frac{2}{4} E = − 4 2 l o g 2 4 2 − 4 2 l o g 2 4 2 E = − 1 2 log 2 1 2 − 1 2 log 2 1 2 E=-\frac{1}{2}\log_2\frac{1}{2}-\frac{1}{2}\log_2\frac{1}{2} E = − 2 1 log 2 2 1 − 2 1 log 2 2 1 E = − 0.5 log 2 2 − 1 − 0.5 log 2 2 − 1 E=-0.5\log_22^{-1}-0.5\log_22^{-1} E = − 0.5 log 2 2 − 1 − 0.5 log 2 2 − 1 E = 0.5 ⋅ 1 + 0.5 ⋅ 1 E = 0.5 \cdot 1 + 0.5 \cdot 1 E = 0.5 ⋅ 1 + 0.5 ⋅ 1

= 1 − ( 6 10 ⋅ 1 + 4 10 ⋅ 1 ) =1-\left(\frac{6}{10}\cdot1+\frac{4}{10}\cdot1\right) = 1 − ( 10 6 ⋅ 1 + 10 4 ⋅ 1 ) = 1 − ( 0.6 + 0.4 ) = 0 =1 - (0.6 + 0.4) = 0 = 1 − ( 0.6 + 0.4 ) = 0 ✅ Gain(Type) = 0

Find the maximum Gain ✅ Gain(Age) = 0.6

✅ Gain(Competition) = 0.125

✅ Gain(Type) = 0

max ( 0.6 , 0.125 , 0 ) = 0.6 \text{max}\left(0.6,0.125,0\right)=0.6 max ( 0.6 , 0.125 , 0 ) = 0.6 Age gives the highest gain (0.6) .

Thus, "Age" will be placed at the root of the Decision Tree.

, a subset of for which Age = Old

Age Competition Type Profit (Class) Old Yes Soft Down Old No Soft Down Old No Hard Down

Observation:

All 3 examples → Profit = Down thus

E n t r o p y ( S 1 ) = 0 Entropy(S^1)=0 E n t r o p y ( S 1 ) = 0 Leaf Node = "Down"

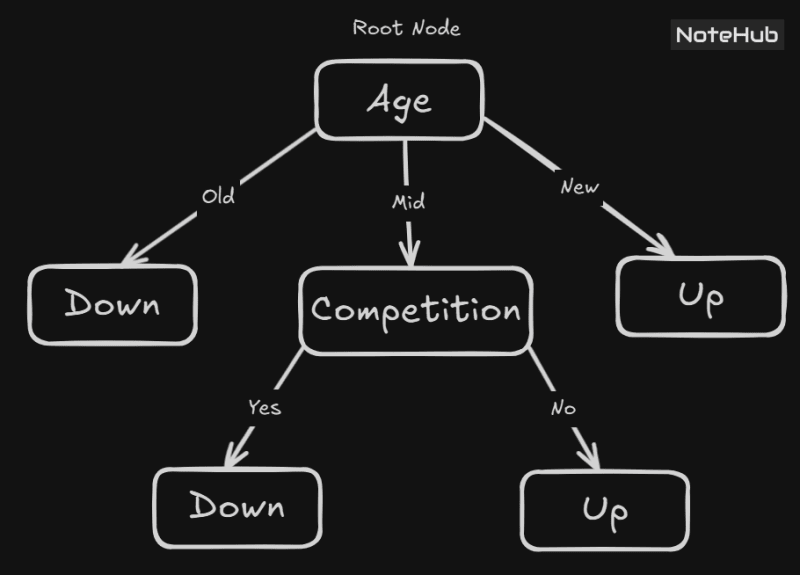

a subset of for which Age = Mid

Age Competition Type Profit (Class) Mid Yes Soft Down Mid Yes Hard Down Mid No Hard Up Mid No Soft Up

Observation:

2 examples → Down 2 examples → Up E n t r o p y ( S 2 ) = − 2 4 l o g 2 2 4 − 2 4 l o g 2 2 4 Entropy\left(S^2\right)=-\frac{2}{4}log_2\frac{2}{4}-\frac{2}{4}log_2\frac{2}{4} E n t r o p y ( S 2 ) = − 4 2 l o g 2 4 2 − 4 2 l o g 2 4 2

✅ Mid group is impure (entropy = 1).

🔷Attribute 1: Competition(Yes, No)

Yes: = [Down, Down]

E n t r o p y ( Yes ) = 0 Entropy(\text{Yes})=0 E n t r o p y ( Yes ) = 0

No: = [Up, Up]

E n t r o p y ( No ) = 0 Entropy(\text{No})=0 E n t r o p y ( No ) = 0

= 1 − ( 2 4 ⋅ 0 + 2 4 ⋅ 0 ) =1-\left(\frac{2}{4}\cdot0+\frac{2}{4}\cdot0\right) = 1 − ( 4 2 ⋅ 0 + 4 2 ⋅ 0 ) ✅ Gain(Competition) = 1

🔷Attribute 2: Type(Soft, Hard)

Soft: = [Down, Up]

− 1 2 l o g 2 1 2 − 1 2 l o g 2 1 2 -\frac{1}{2}log_2\frac{1}{2}-\frac{1}{2}log_2\frac{1}{2} − 2 1 l o g 2 2 1 − 2 1 l o g 2 2 1

Hard : = [Down, Up]

− 1 2 l o g 2 1 2 − 1 2 l o g 2 1 2 -\frac{1}{2}log_2\frac{1}{2}-\frac{1}{2}log_2\frac{1}{2} − 2 1 l o g 2 2 1 − 2 1 l o g 2 2 1

= 1 − ( 2 4 ⋅ 1 + 2 4 ⋅ 1 ) =1-\left(\frac{2}{4}\cdot1+\frac{2}{4}\cdot1\right) = 1 − ( 4 2 ⋅ 1 + 4 2 ⋅ 1 ) ✅ Gain(Type) = 1

Since the Gain is maximum for the attribute , putting the competition at Node 2

a subset of for which Age = New

Age Competition Type Profit (Class) New Yes Soft Up New No Hard Up New No Soft Up

All 3 examples → Profit = Up E n t r o p y ( N e w ) = 0 Entropy(New)=0 E n t r o p y ( N e w ) = 0 Leaf Node = "Up"

All the corresponding class labels are down in

E n t r o p y ( N e w ) = 0 Entropy(New)=0 E n t r o p y ( N e w ) = 0 Leaf Node = "Down"

All the corresponding class labels are up in

E n t r o p y ( N e w ) = 0 Entropy(New)=0 E n t r o p y ( N e w ) = 0 Leaf Node = "Up"